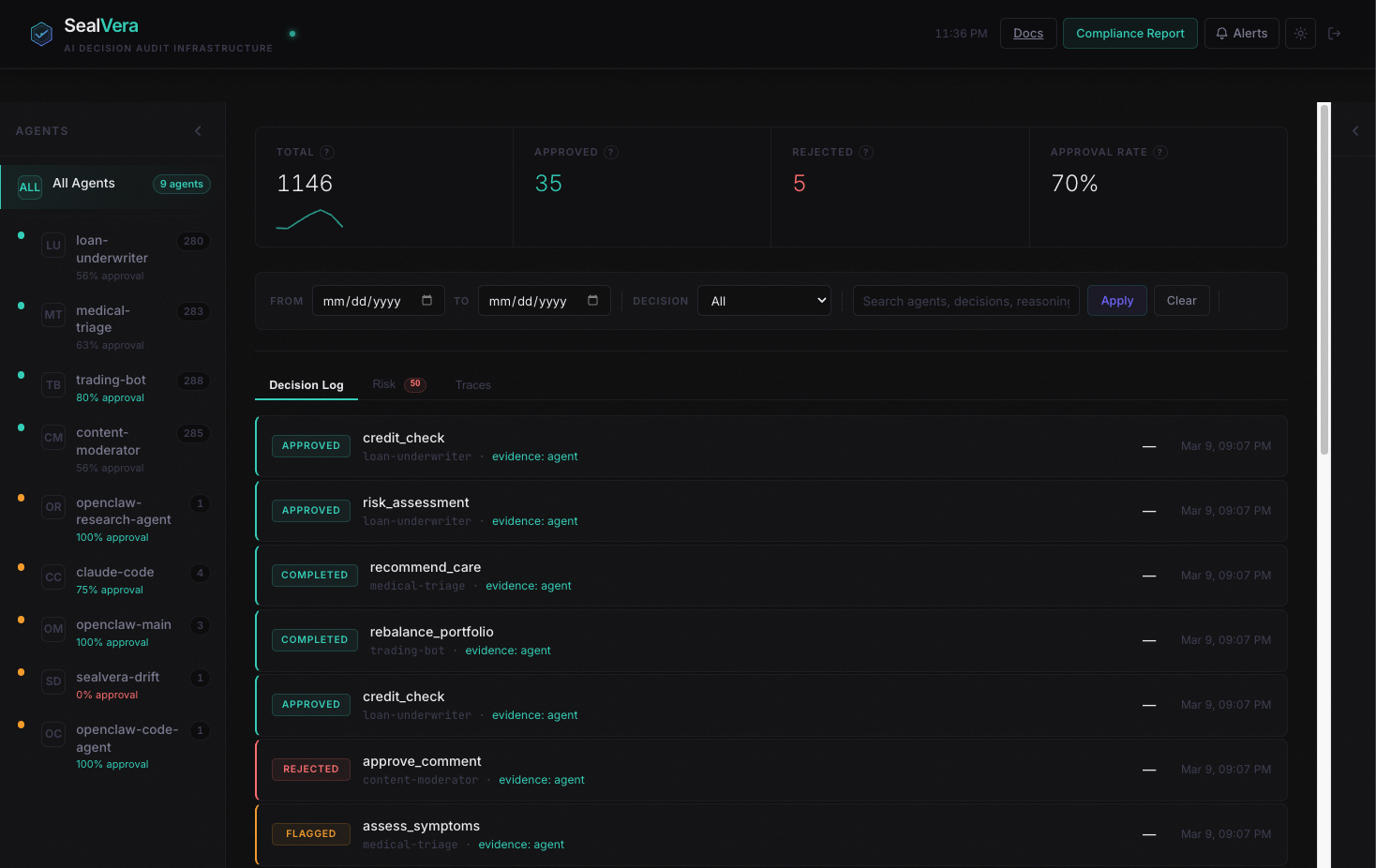

Not another dashboard.

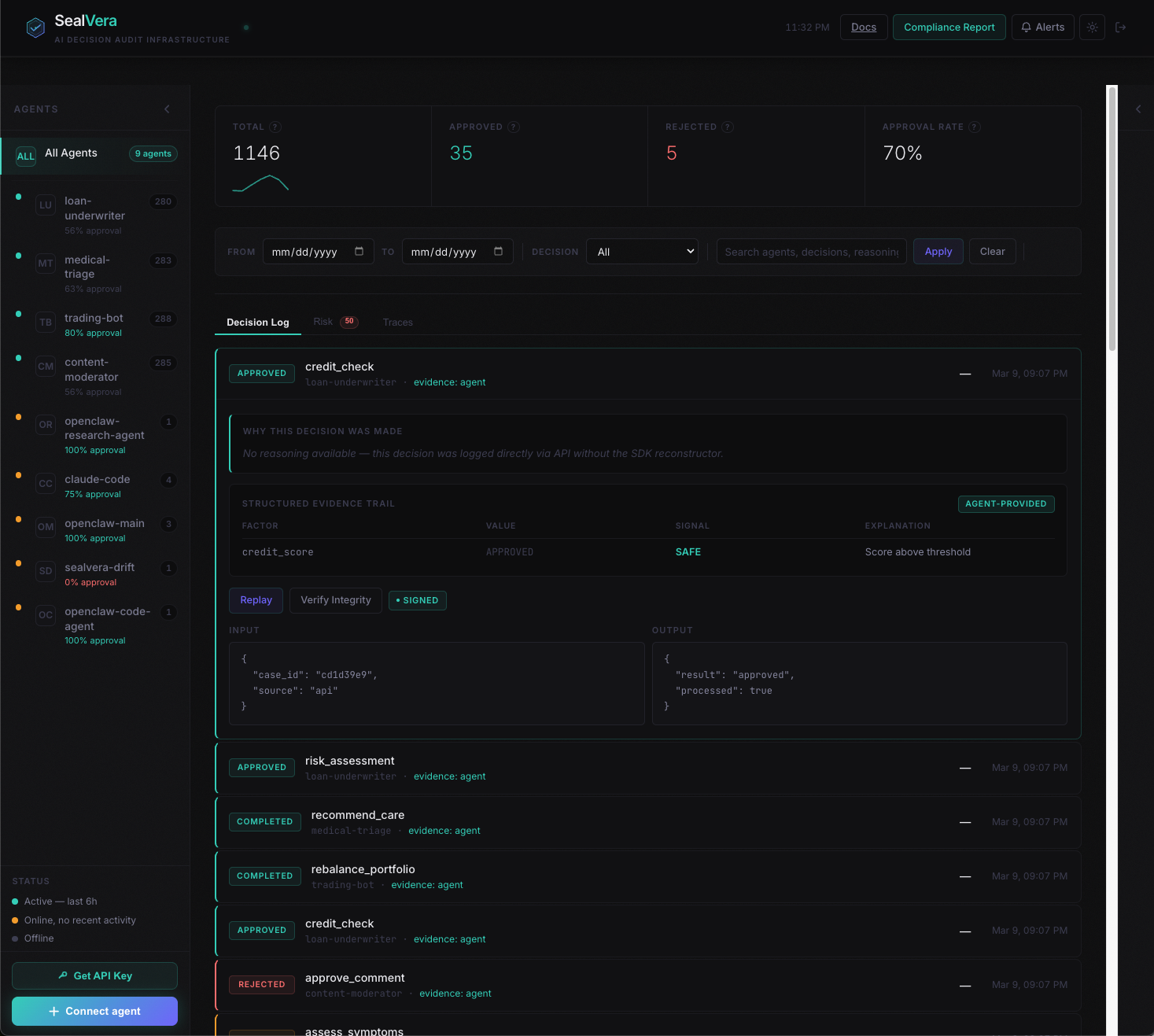

A tamper-proof log of every AI decision.

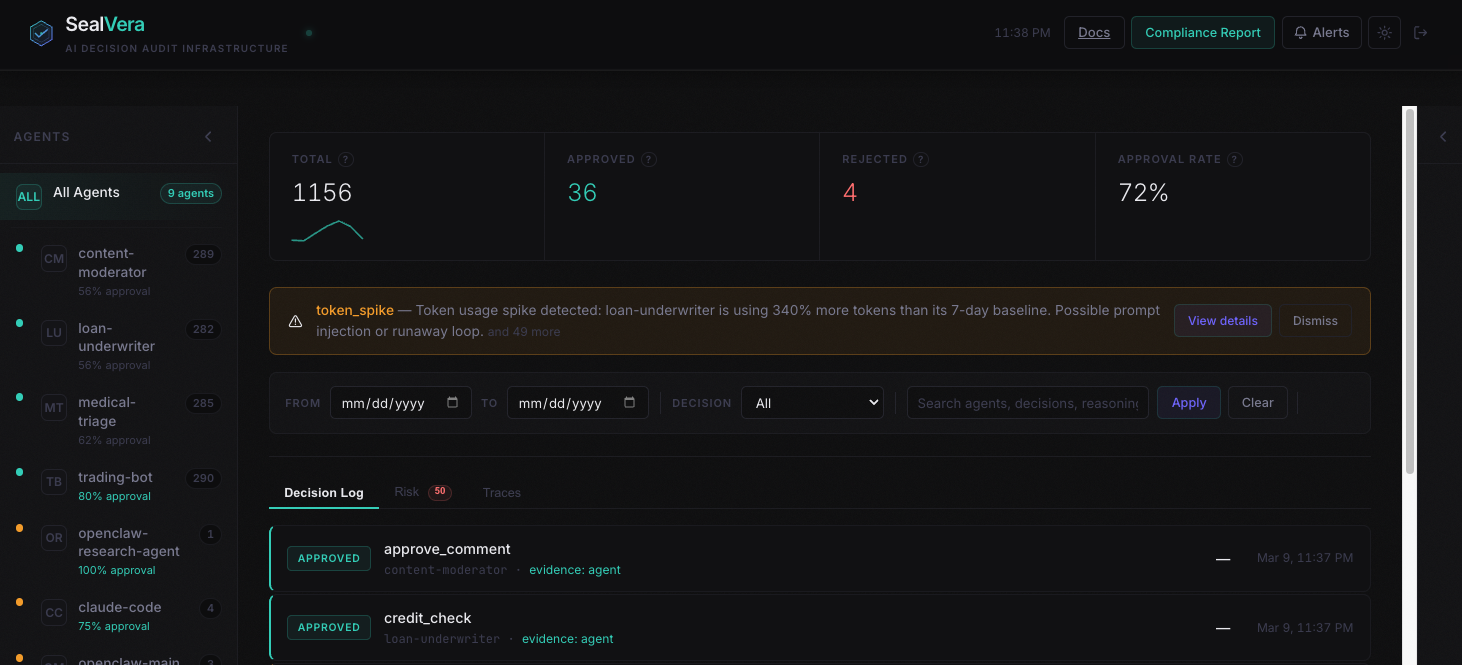

SealVera keeps a tamper-proof, append-only record of every decision your agent makes. When an auditor, lawyer, or customer asks "why did your agent do that?", you can answer with evidence: every decision in order, with no gaps, and with no edits to the record after the fact.
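To make "append-only, in order, without gaps" concrete, here is a minimal sketch of one common way to build such a record: a hash chain, where each entry stores the hash of the one before it. This illustrates the general technique only and is not a description of SealVera's implementation; `DecisionLog`, `verify`, and the entry fields are hypothetical names chosen for the example.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry (an assumption of this sketch).
GENESIS = "0" * 64


def _digest(index, decision, prev_hash):
    """Hash an entry's contents together with the previous entry's hash."""
    payload = json.dumps(
        {"index": index, "decision": decision, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class DecisionLog:
    """Hypothetical append-only log: entries can be added, never changed."""

    def __init__(self):
        self._entries = []

    def append(self, decision):
        index = len(self._entries)
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {
            "index": index,
            "decision": decision,
            "prev_hash": prev_hash,
            "hash": _digest(index, decision, prev_hash),
        }
        self._entries.append(entry)
        return entry

    def entries(self):
        # Return copies so callers cannot mutate the stored entries directly.
        return [dict(e) for e in self._entries]


def verify(entries):
    """Return True only if the entries are unedited, in order, and gap-free."""
    prev_hash = GENESIS
    for expected_index, entry in enumerate(entries):
        if entry["index"] != expected_index:
            return False  # an entry is missing or out of order
        if entry["prev_hash"] != prev_hash:
            return False  # the chain link to the previous entry is broken
        if entry["hash"] != _digest(entry["index"], entry["decision"], entry["prev_hash"]):
            return False  # the entry's contents were changed after it was written
        prev_hash = entry["hash"]
    return True
```

Because each hash covers the previous one, editing, removing, or reordering any entry breaks verification for everything after it. A bare chain like this does not detect truncation of the most recent entries or a full rewrite by someone who recomputes every hash; guarding against those requires publishing or signing the latest hash somewhere outside the log.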